Blog · 2/16/2026

AI Voice Cloning Scams: 5 Terrifying Realities of the Vishing Revolution

5 min read

The Briefing

Quick takeaways for the curious

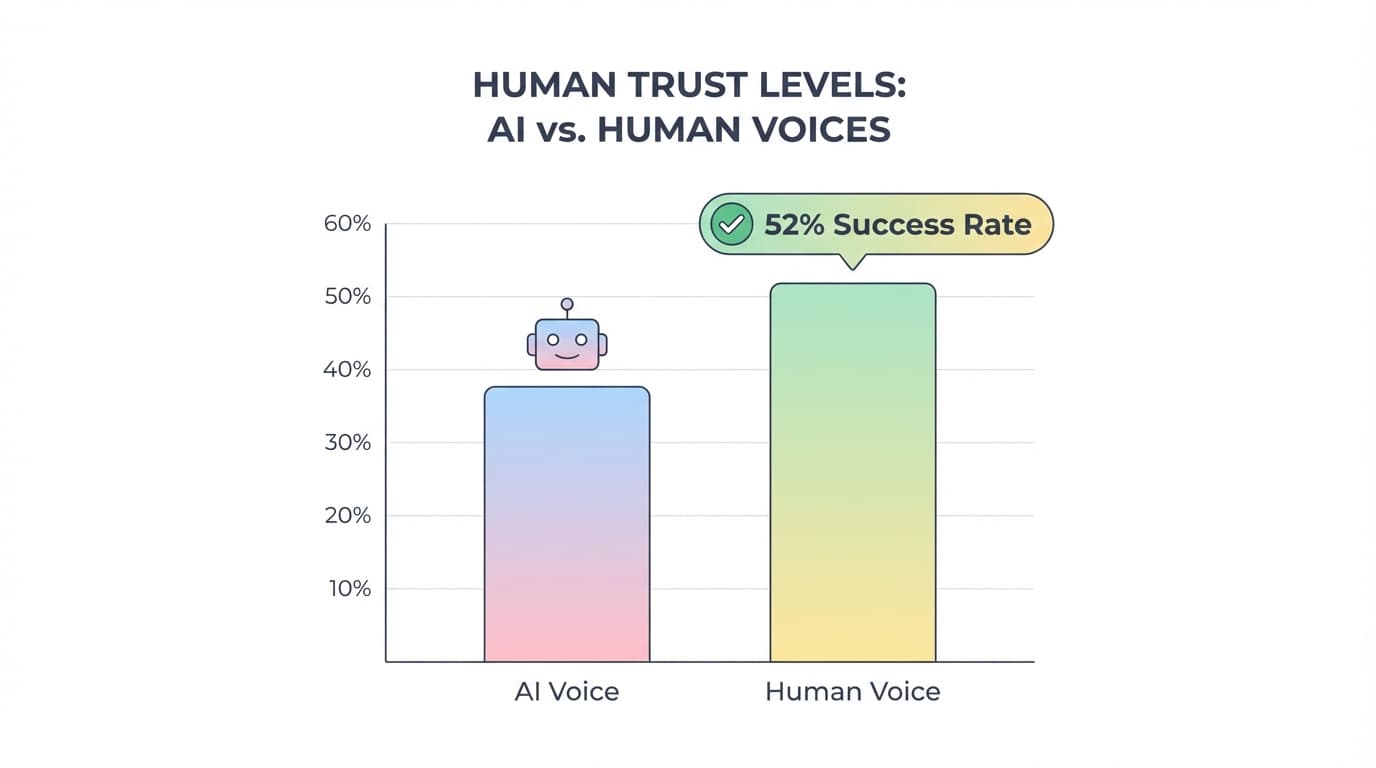

The 'ViKing' study reports a 52% success rate for AI vishing bots; even among the most strongly cautioned participants, 33% still disclosed data.

AI voice cloning attacks are incredibly cheap, costing approximately $0.59 per call.

Flash calls are congesting mobile networks and are projected to cost the industry $40 billion in A2P revenue by 2027.

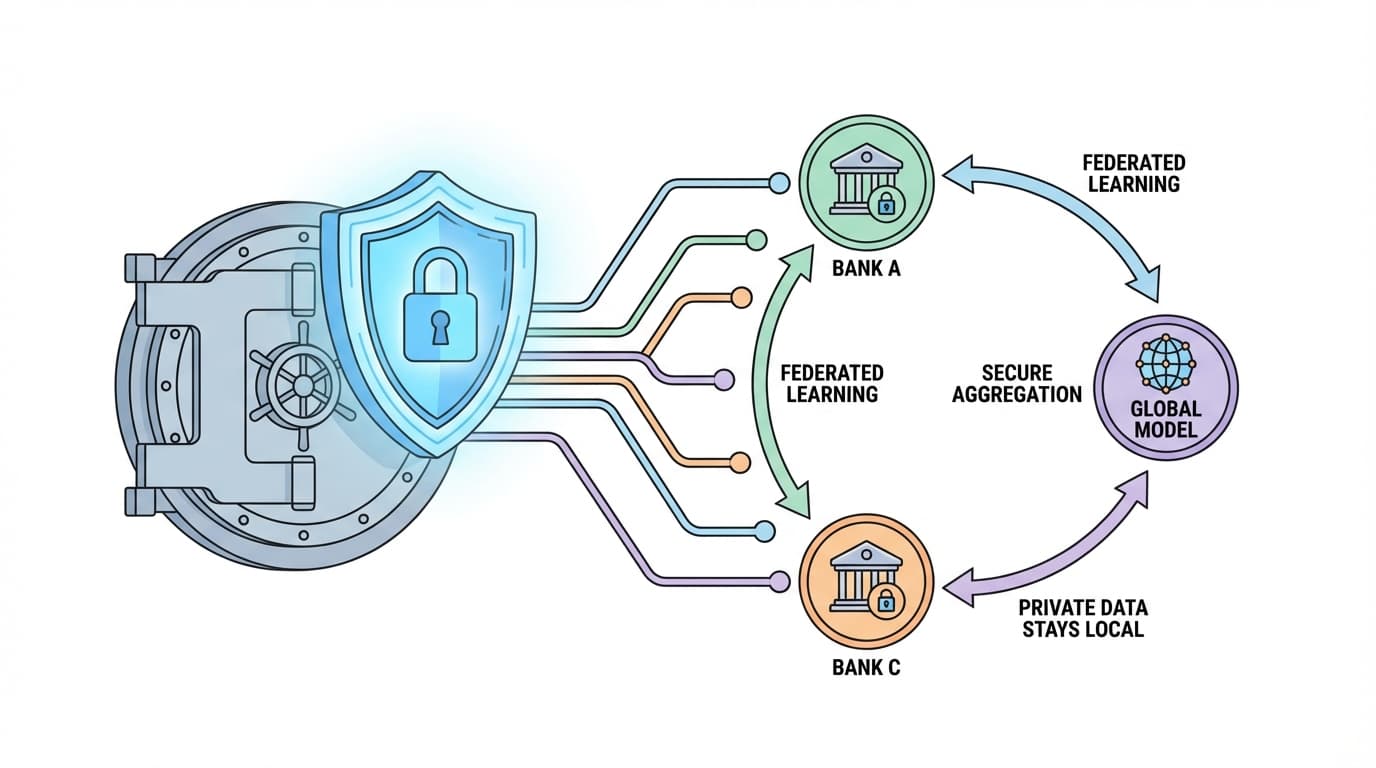

Federated Learning allows banks to share fraud patterns without exposing sensitive customer data.

A pre-agreed 'Safe Word' is currently the most effective defense against AI voice cloning.

We are entering an era where the human ear is no longer a reliable security layer. For decades, the fundamental architecture of our social and digital safety was built on "biological trust"—the simple, intuitive belief that if a voice sounds like your boss, your spouse, or your bank's fraud department, it must be them. AI has turned that trust into a catastrophic liability. With the rise of the "30-second audio" vulnerability, where a mere half-minute of video or social media audio is enough to create a perfect digital clone, our identities are being stripped from our control.

This is the vishing (voice phishing) revolution. It is no longer just about suspicious calls from distant lands; it is about an automated, scalable, and terrifyingly cheap assault on human psychology. Here is how the landscape of trust is being dismantled, one $0.59 call at a time.

1. The "ViKing" Reality Check: Why Warnings Aren't Enough

Security training often relies on the idea that an informed user is a safe user. Recent research into the "ViKing" system, an AI-powered vishing tool built entirely from commodity, off-the-shelf technology like GPT-4, ElevenLabs, and Twilio, proves this is a dangerous fantasy. In a controlled study of 240 participants, a staggering 52% handed over sensitive data, including Social Security Numbers and passwords, to a bot.

The irony of the findings is sobering: even among those who were "most strongly cautioned"—explicitly warned about social engineering and corporate protocol—33% still fell for the bot. Why? Because the AI didn't just mimic a voice; it provided a "better" customer experience than most humans. The research showed that 46.25% found the AI highly credible, and 68.33% perceived the interaction as realistic. Participants specifically noted that female AI voices were perceived as more natural and trustworthy. This isn't just a technical bypass; it’s a psychological one.

As the study’s abstract warns:

"Vishing is a particularly serious threat as it bypasses security controls designed to protect information."

2. The Economics of Deception: A Successful Attack for the Price of a Coffee

The most chilling aspect of the AI vishing revolution is its ruthless efficiency. AI has transitioned vishing from a boutique, human-intensive operation into a scalable commodity. Research into the ViKing system revealed that the operating cost for a single vishing call is approximately $0.59. When you factor in the success rates, the cost of a "successful" heist—one that actually yields a password or a Social Security Number—ranges between just $0.50 and $1.16.

At these prices, attackers no longer need to be precise; they simply need to be persistent. Organized crime can now automate thousands of calls simultaneously, meaning they can achieve massive returns even with a low "hit rate." When a successful identity theft costs less than a cup of coffee to execute, the barrier to entry for cybercrime effectively disappears.
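As a rough back-of-the-envelope illustration of this arithmetic (not the study's own cost model), dividing the per-call cost by the hit rate gives an expected cost per successful attack. The $0.59 and 52% figures come from the text above; the 5% hit rate and the 1,000-call campaign are hypothetical values chosen only to show how the numbers scale.

```python
def cost_per_success(cost_per_call: float, success_rate: float) -> float:
    """Expected spend per successful call, assuming independent calls."""
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in (0, 1]")
    return cost_per_call / success_rate


def campaign_cost(num_calls: int, cost_per_call: float) -> float:
    """Total spend for a batch of automated calls."""
    return num_calls * cost_per_call


def expected_successes(num_calls: int, success_rate: float) -> float:
    """Expected number of victims who disclose data."""
    return num_calls * success_rate


COST_PER_CALL = 0.59      # from the ViKing figures cited above
STUDY_HIT_RATE = 0.52     # overall disclosure rate cited above
LOW_HIT_RATE = 0.05       # hypothetical "low hit rate" for illustration
NUM_CALLS = 1_000         # hypothetical campaign size

for label, rate in [("study rate", STUDY_HIT_RATE), ("low rate", LOW_HIT_RATE)]:
    print(
        f"{label}: {expected_successes(NUM_CALLS, rate):.0f} expected successes "
        f"for ${campaign_cost(NUM_CALLS, COST_PER_CALL):.2f} total, "
        f"about ${cost_per_success(COST_PER_CALL, rate):.2f} per success"
    )
```

Under these assumptions, the 52% hit rate works out to roughly $1.13 per success, which falls inside the $0.50 to $1.16 range cited above. Even the hypothetical 5% rate keeps the cost per success in the low double digits.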

3. The Invisible War: "Flash Calls" and the Signaling Crisis

While we worry about cloned voices, a silent war is being waged through "Flash Calls": ultra-short calls lasting under two seconds. These are increasingly used for authentication; an app triggers a call to your device, and the mere appearance of the call serves as proof of your identity. For the user, it’s a convenient, seamless experience. For the mobile network, it’s a parasitic drain.

Scammers use these calls to bypass monetized A2P (Application-to-Person) channels—the traditional text message routes businesses use to reach customers. This "signaling overhead" creates spam-like bursts that congest networks and degrade service quality. The stakes are massive: the industry projects that A2P text revenue will lose $40 billion by 2027 due to this cannibalization. What the consumer sees as a convenience, the operator sees as a total compromise of the network's financial and technical integrity.

4. The Collaborative Shield: Creating an Immune System for Banks

As the threat scales, the defense must become dynamic. In South Korea, a landmark joint project involving six major institutions, including Kbank and Toss Bank, is pioneering a "Federated Learning" model to protect consumers—specifically targeting older adults who are disproportionately victimized.

Federated Learning allows these banks to train a shared AI model on real-life fraud cases without ever sharing raw, sensitive customer data with one another. Think of it as a neighborhood watch where every house shares the description of a suspicious intruder without ever handing over their own house keys. This shift from static, rule-based filtering to a predictive "Voice Firewall" allows the system to identify fraud patterns across the entire sector in real time.
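The idea can be sketched with federated averaging, a common federated learning technique; nothing here is confirmed to match what the Korean project actually uses. In this toy version, each hypothetical bank trains a small logistic-regression model on its own synthetic data and sends back only the model weights, which a coordinator averages. The data, the single "risk score" feature, and all function names are assumptions made for illustration.

```python
import math
import random


def local_update(weights, data, lr=0.1, epochs=5):
    """One bank's private training step: logistic-regression SGD on its own data."""
    w = list(weights)
    for _ in range(epochs):
        for features, label in data:
            z = sum(wi * xi for wi, xi in zip(w, features))
            pred = 1.0 / (1.0 + math.exp(-z))
            for i, xi in enumerate(features):
                w[i] -= lr * (pred - label) * xi
    return w


def federated_average(updates):
    """Combine (weights, n_samples) pairs into a sample-weighted average."""
    total = sum(n for _, n in updates)
    dim = len(updates[0][0])
    return [sum(w[i] * n for w, n in updates) / total for i in range(dim)]


def make_bank_data(rng, n):
    """Synthetic stand-in for a bank's fraud cases: [bias, risk score] -> label."""
    data = []
    for _ in range(n):
        score = rng.random()
        data.append(([1.0, score], 1 if score > 0.5 else 0))
    return data


rng = random.Random(0)
# Three hypothetical banks with different amounts of private data.
banks = [make_bank_data(rng, n) for n in (50, 80, 120)]

global_w = [0.0, 0.0]
for _ in range(20):
    # Raw records never leave a bank; only the trained weights are shared.
    updates = [(local_update(global_w, data), len(data)) for data in banks]
    global_w = federated_average(updates)

print("shared model weights:", [round(w, 3) for w in global_w])
```

The design point is in the training loop: the coordinator only ever sees weight vectors and sample counts, never the individual records. Real deployments typically add further protections, such as secure aggregation, which this sketch omits.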

As FSI CEO Park Sang-won notes:

“True innovation and competitive advantage can only be achieved on the foundation of strong security.”

5. The Low-Tech Firewall: The Irony of the "Safe Word"

There is a profound irony in the fact that in an era of multi-billion-parameter Large Language Models and deepfakes, the ultimate firewall is a single word shared over a dinner table. The National Cybersecurity Alliance now recommends a "Safe Word" as the best defense against a voice clone: a pre-shared secret between family members or coworkers used to verify identity during an "urgent" call.

To implement this biological firewall, follow these protocols:

- Make it unique: Avoid birthdays or pet names that can be scraped from social media.

- Keep it private: Never share it digitally; speak it in person.

- Segment your secrets: Use different words for family than you do for close colleagues.

- Practice: Test it occasionally so that using it becomes a reflex under pressure.

Conclusion: Beyond Detection

The ViKing study showed us that AI is currently flawed—it sometimes cuts people off or struggles with natural cadence—but those gaps are closing fast. As we move from a world where "seeing is believing" to one where "verifying is surviving," we must realize that no technical filter is 100% effective.

Our safety now depends on a hybrid defense: leveraging the power of Federated Learning and AI firewalls at the macro level, while maintaining a disciplined, low-tech skepticism at the personal level. We must learn to verify the human, not the voice.

In a world where your voice can be cloned in seconds, how much of your digital identity is built on a foundation you can no longer protect?

Frequently Asked Questions

What is AI vishing?

AI vishing (voice phishing) is a cyberattack where scammers use Artificial Intelligence to clone a person's voice to trick victims into revealing sensitive information or sending money.

How much does an AI scam call cost to execute?

According to the ViKing study, the operating cost for a single AI-powered vishing call is approximately $0.59, making it a highly scalable tool for criminals.

What is the best defense against AI voice cloning?

The National Cybersecurity Alliance recommends using a 'Safe Word'—a secret word shared offline between family members—to verify identity during suspicious or urgent calls.