Blog | 2/16/2026

RIP Prompt Engineering: Why 2026 is the Year AI Agents Take Over

6-minute read

The Briefing

Quick takeaways for the curious

The shift from 'Read-Only' Chatbots to 'Read-Write' Agents allows AI to execute tasks rather than just summarizing text.

The ReAct (Reasoning + Acting) framework reduces hallucinations by grounding AI reasoning in external tools and APIs.

Multi-Agent crews, where specialized agents collaborate (e.g., Planner, Writer, Safeguard), can outperform a single monolithic agent on complex tasks.

Reflexion allows agents to learn from 'verbal reinforcement' and self-correction, bypassing expensive traditional training methods.

Governance and 'Human-in-the-Loop' remain critical as agents gain the ability to modify live production systems.

The Death of the Prompt: Why 2026 is the Year AI Stops Talking and Starts Executing

Introduction: The Great Shift from Conversation to Action

The technology landscape of 2025 was defined by "chatbot fatigue." While the previous year brought Large Language Models (LLMs) into the mainstream, users quickly slammed into the ceiling of conversational AI: systems that could talk endlessly but lacked the agency to actually do anything. As we move into 2026, we are witnessing a tectonic shift in the orchestration layer of enterprise technology.

We are moving away from "Read-Only" AI—reactive systems that merely process and summarize information—and toward "Read-Write" AI. This is the era of the AI Agent, where models stop suggesting and start executing. In 2026, the competitive advantage won’t go to the team with the best prompt engineers, but to those who can build autonomous workers capable of navigating our digital world.

From "Read-Only" to "Read-Write" Intelligence

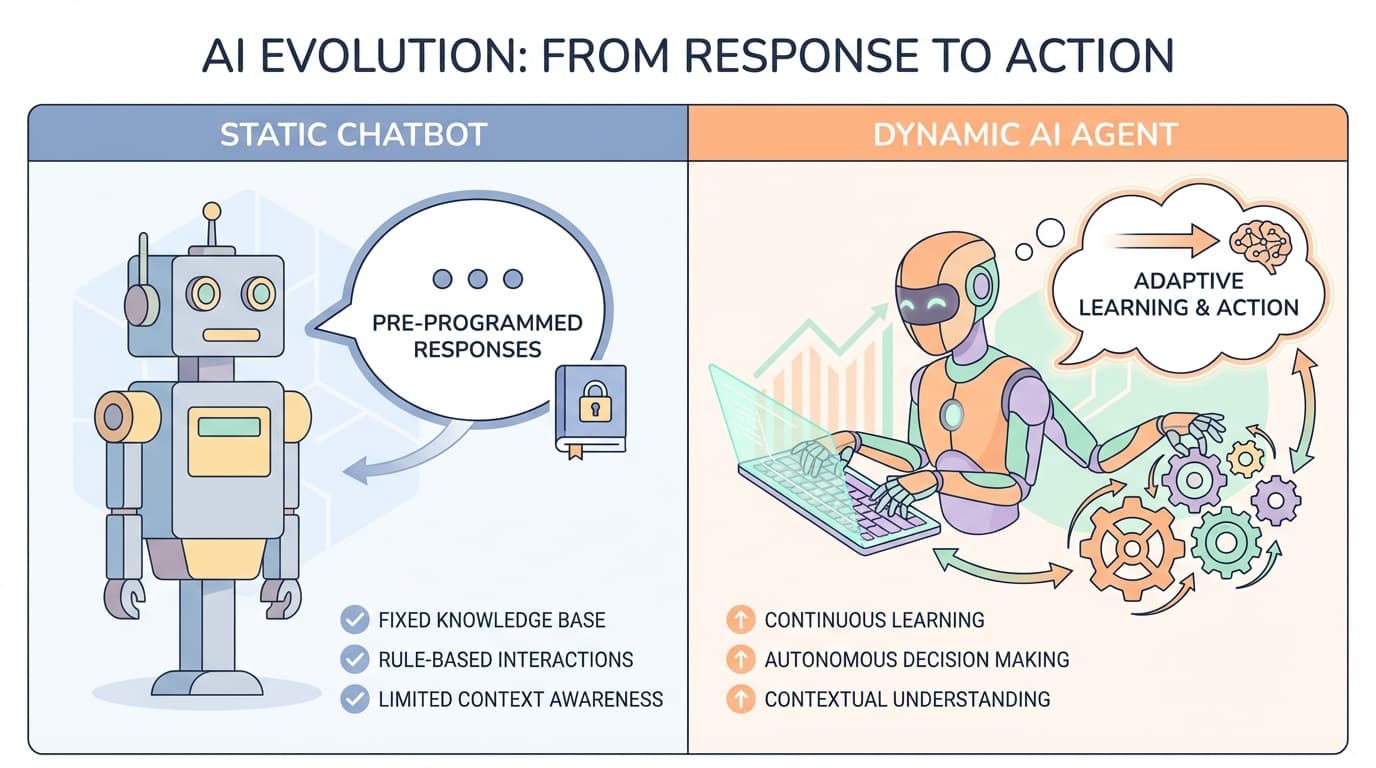

The core distinction between 2025’s chatbots and 2026’s agents lies in their relationship with the environment. A chatbot waits for a prompt; an agent identifies an objective and pursues it proactively.

The "secret sauce" of the Read-Write era is Tool Integration. This allows the LLM to escape the confines of its "frozen" training data. By interfacing with live databases and software through API calls, the model transforms from a static library into a dynamic operator. It no longer just describes a cost-saving strategy; it logs into your cloud console and implements it.
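As a rough illustration of tool integration, the sketch below routes a model-proposed tool call to ordinary Python functions. Everything in it is hypothetical: the `INVENTORY` data, the `check_stock` and `restock` tools, and the `{"name": ..., "args": ...}` call format are assumptions made for the example, not any vendor's API.

```python
from typing import Callable, Dict

# Hypothetical in-memory data standing in for a live database or API.
INVENTORY: Dict[str, int] = {"widget": 12, "gadget": 0}


def check_stock(item: str) -> str:
    """Read-style tool: report the current stock level."""
    return f"{item}: {INVENTORY.get(item, 0)} in stock"


def restock(item: str, quantity: int) -> str:
    """Write-style tool: change the state of the system."""
    INVENTORY[item] = INVENTORY.get(item, 0) + quantity
    return f"{item} restocked to {INVENTORY[item]}"


# Registry of the tools the agent is allowed to use.
TOOLS: Dict[str, Callable[..., str]] = {
    "check_stock": check_stock,
    "restock": restock,
}


def dispatch(tool_call: dict) -> str:
    """Route a model-proposed call to real code, rejecting unknown tools."""
    name = tool_call["name"]
    if name not in TOOLS:
        return f"error: unknown tool {name!r}"
    return TOOLS[name](**tool_call["args"])


print(dispatch({"name": "check_stock", "args": {"item": "widget"}}))
print(dispatch({"name": "restock", "args": {"item": "gadget", "quantity": 5}}))
```

The registry is the design choice that matters here: the model only ever names a tool, and the surrounding code decides whether that name maps to anything executable.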

Key Differences

- Initiative: Chatbots are reactive; Agents are proactive.

- Memory: Chatbots are stateless; Agents utilize contextual, long-term memory.

- Execution: Chatbots suggest; Agents take direct action on systems.

- Risk: Agents carry higher production risks due to system modification capabilities.

Why 2026 is the Year of "Agentic" Infrastructure

The breakthrough of 2026 is not an accident of research, but a convergence of infrastructure maturity and industry momentum. As highlighted at CES 2026, "agentic AI" has moved from the laboratory to the production environment. Major platforms like AWS Bedrock Agents, Azure AI Studio, and Google Vertex AI Agent Builder now provide the stability required for enterprise-grade deployment.

The market reality matches the hype. Research indicates the AI Agent market is projected to soar from $8 billion in 2025 to $48.3 billion by 2030. However, the pivot to agents requires a new strategic philosophy: success depends on deploying the right agents for the right problems with the right guardrails.

Synergizing Reasoning and Acting (The ReAct Model)

To move from talk to action, agents utilize the ReAct (Reasoning + Acting) framework. Earlier approaches relied on "Chain-of-Thought" (CoT) prompting, but CoT on its own is prone to fact hallucination because its reasoning is never checked against anything outside the model.

ReAct addresses this by interleaving "verbal reasoning traces" with task-specific actions. For example, when tasked with a multi-hop question, the agent can call a Wikipedia API to "ground" its reasoning in external facts before taking the next step. This creates a feedback loop: the agent reasons about what it knows, acts to fill its knowledge gaps, and updates its plan based on what it finds.
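The reason-act-observe loop described above can be sketched in a few lines. This is a toy version under stated assumptions: `scripted_policy` is a hard-coded stand-in for the LLM, and the `KNOWLEDGE` table is a made-up replacement for an external source such as a search API. Only the control flow is meant to be representative.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

# Made-up lookup table standing in for an external source (e.g. a search API).
KNOWLEDGE = {
    "owner of checkout-service": "payments-team",
    "on-call for payments-team": "dana",
}


@dataclass
class Step:
    thought: str
    action: str
    argument: str
    observation: str


def lookup(query: str) -> str:
    """The only tool available to this toy agent."""
    return KNOWLEDGE.get(query, "no result")


def scripted_policy(question: str, history: List[Step]) -> Tuple[str, str, str]:
    """Stand-in for the LLM (ignores the question text): returns (thought, action, argument)."""
    if not history:
        return ("I need the owning team first.", "lookup", "owner of checkout-service")
    if len(history) == 1:
        team = history[0].observation
        return (f"Owner is {team}; now find who is on call.", "lookup", f"on-call for {team}")
    return ("Both facts came from lookups.", "finish", history[-1].observation)


def react(question: str, max_steps: int = 5) -> Tuple[Optional[str], List[Step]]:
    """Interleave reasoning and acting until the policy decides to finish."""
    history: List[Step] = []
    for _ in range(max_steps):
        thought, action, argument = scripted_policy(question, history)
        if action == "finish":
            return argument, history
        # Act, then feed the observation back into the next reasoning step.
        history.append(Step(thought, action, argument, lookup(argument)))
    return None, history


answer, trace = react("Who is on call for checkout-service?")
print(answer)  # dana
```

Note that the second lookup is built from the first observation, which is the multi-hop behavior the paragraph describes: the plan is updated by what the tool returned, not by what the model assumed.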

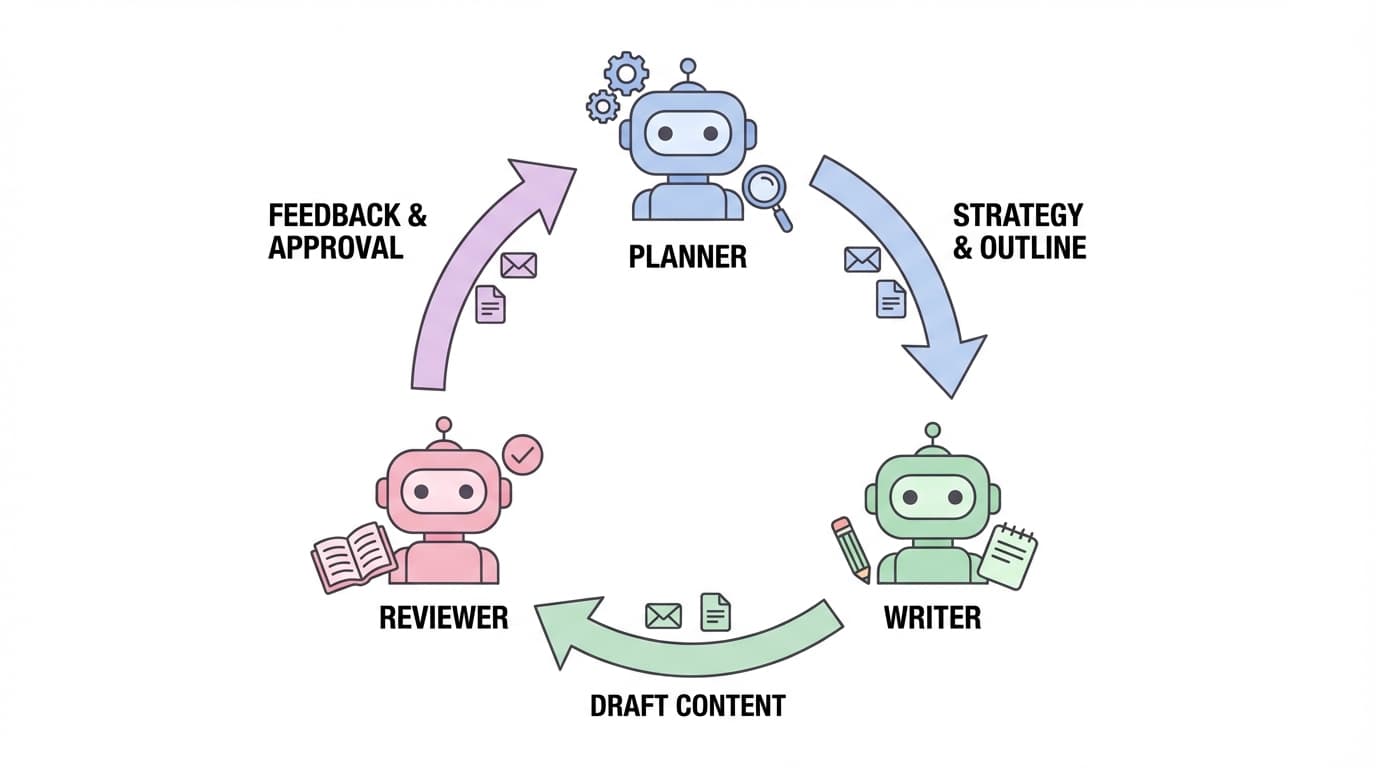

The Rise of the "Multi-Agent" Crew

The era of the "Lone Ranger" AI is over. We are seeing a 300 percent year-over-year growth in multi-agent support across open-source communities because specialized "crews" are inherently more stable than single, monolithic models. By decomposing a complex task into roles—such as a Planner, a Writer, and a Safeguard—organizations can achieve massive efficiency gains.

The data supports this shift: the team behind Microsoft's AutoGen reports that, in one application, a multi-agent design cut the core workflow code from over 430 lines to 100. The same work reports that multi-agent configurations improved the detection of unsafe code by up to 35%. When agents give feedback to one another in a "dynamic group chat," much of the manual burden on the human developer falls away.
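To make the Planner, Writer, and Safeguard roles concrete, here is a minimal sketch in plain Python. It deliberately does not use the AutoGen API; the three role functions, the `BLOCKED` pattern list, and the canned drafts are all hypothetical stand-ins for LLM-backed agents. The drafts are only strings and are never executed.

```python
from typing import List, Optional, Tuple


def planner(task: str) -> List[str]:
    """Break the task into steps (a real Planner would call an LLM)."""
    return [f"draft code for: {task}", "review draft for unsafe operations"]


def writer(task: str, feedback: Optional[str]) -> str:
    """Produce a draft, and revise it once the Safeguard has objected."""
    if feedback is None:
        return "import os\nos.system('rm -rf /tmp/cache')"
    return "import shutil\nshutil.rmtree('/tmp/cache', ignore_errors=True)"


# Hypothetical deny-list; a real Safeguard would be far more thorough.
BLOCKED = ("os.system", "eval(", "exec(")


def safeguard(code: str) -> Optional[str]:
    """Return None if the draft passes, otherwise a textual objection."""
    for pattern in BLOCKED:
        if pattern in code:
            return f"unsafe call {pattern!r}; use a safer API"
    return None


def run_crew(task: str, max_rounds: int = 3) -> Tuple[str, List[str]]:
    """Let the Writer and Safeguard iterate until a draft is approved."""
    transcript = [f"plan: {planner(task)}"]
    feedback: Optional[str] = None
    for round_no in range(1, max_rounds + 1):
        draft = writer(task, feedback)
        feedback = safeguard(draft)
        verdict = "approved" if feedback is None else f"rejected ({feedback})"
        transcript.append(f"round {round_no}: {verdict}")
        if feedback is None:
            return draft, transcript
    raise RuntimeError("no safe draft within the round limit")


draft, log = run_crew("clear the cache directory")
print("\n".join(log))
```

The point of the split is that the reviewing role never sees its own output: the Safeguard's objection is passed back to the Writer as text, which is a small-scale version of agents checking each other's work.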

"Verbal Reinforcement" and the Power of Self-Reflection

Traditional Reinforcement Learning (RL) is computationally expensive and slow. The Reflexion framework bypasses this by using "verbal reinforcement." Instead of updating model weights based on a simple scalar reward, the agent learns from linguistic feedback.

The Reflexion architecture consists of three components: an Actor, an Evaluator, and a Self-Reflection model. The Evaluator scores each attempt, and the Self-Reflection model turns that score into a "semantic gradient signal" (a nuanced textual summary of what went wrong), which is then stored in the agent's memory for the next attempt. This approach has led to a reported 97% success rate in ALFWorld decision-making tasks, suggesting that agents can effectively "coach" themselves toward much better performance without any weight updates.
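The Actor, Evaluator, and Self-Reflection loop can be illustrated with a toy task. The sketch below is an assumption-laden simplification: all three components are hard-coded functions rather than LLM calls, and the "locked drawer" task is invented for the example. What it does show faithfully is that learning happens by appending text to memory, with no weights involved.

```python
from typing import List, Optional, Tuple


def actor(memory: List[str]) -> List[str]:
    """Stand-in for the LLM policy: proposes a plan, conditioned on past reflections."""
    if any("key" in note for note in memory):
        return ["find key", "open drawer"]
    return ["open drawer"]


def evaluator(plan: List[str]) -> bool:
    """Sparse binary signal: the drawer only opens if the key was found first."""
    return plan == ["find key", "open drawer"]


def self_reflect(plan: List[str]) -> str:
    """Turn a bare failure into a sentence the Actor can use on the next trial.

    Hard-coded here; in Reflexion this text is generated by a language model.
    """
    return f"Plan {plan} failed: the drawer is locked, so find the key before opening it."


def reflexion(max_trials: int = 3) -> Tuple[Optional[int], List[str]]:
    """Retry the task, storing a verbal reflection after each failed trial."""
    memory: List[str] = []
    for trial in range(1, max_trials + 1):
        plan = actor(memory)
        if evaluator(plan):
            return trial, memory
        memory.append(self_reflect(plan))  # the "verbal reinforcement" step
    return None, memory


trial, memory = reflexion()
print(trial)   # 2
print(memory)
```

The first trial fails, the reflection is stored, and the second trial succeeds because the Actor reads that reflection before planning.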

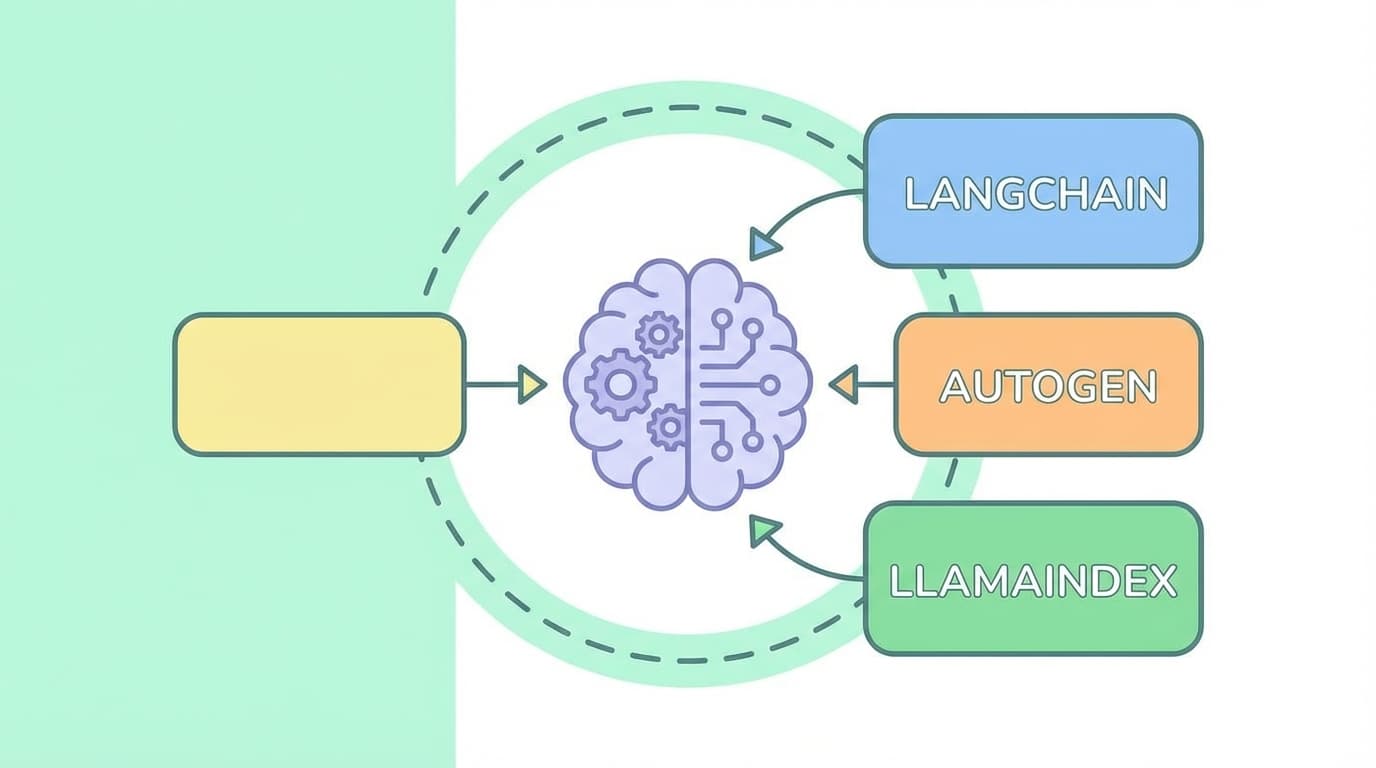

Picking Your 2026 Toolkit

Choosing a framework is now a fundamental architectural decision. The 2026 landscape has segmented into specialized tools:

- LangChain: Still the gold standard for general-purpose flexibility.

- LangGraph: The primary choice for compliance and reporting—best for reliable, stateful workflows.

- AutoGen: The powerhouse for the Microsoft ecosystem, optimized for scaling complex multi-agent collaboration.

- LlamaIndex: The leader for data-heavy retrieval tasks, particularly in healthcare and finance.

Simultaneously, low-code platforms like Langflow and n8n are democratizing these capabilities, allowing product teams to prototype autonomous workflows without deep engineering overhead.

The Reality Check: Trust and Governance

Despite the $48 billion market trajectory, a significant "Trust Gap" persists. Moving from Read-Only to Read-Write increases the risk profile of every deployment. The obstacles are clear:

- Reliability: The risk of agents making errors in live production environments.

- Governance/Security: Managing permissions for an entity that can modify your systems.

- Cost/Complexity: The increased compute required for multi-step reasoning.

To bridge this gap, "Human-in-the-Loop" (HITL) remains a strategic necessity for high-risk operations. We are not yet at the stage where agents should have unfettered access to "delete" buttons without human oversight.
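One way to picture a Human-in-the-Loop gate is as a check that sits between the agent's decision and the system call. The sketch below is illustrative only: the `HIGH_RISK` verb list, the `verb_object` action naming, and the `approve` callback are assumptions for the example. In a real deployment the callback might open a ticket or prompt an operator.

```python
from typing import Callable

# Hypothetical set of verbs treated as high-risk; tune per deployment.
HIGH_RISK = {"delete", "drop", "terminate"}

# (action, target) -> True if a human signs off on the operation.
Approver = Callable[[str, str], bool]


def needs_approval(action: str) -> bool:
    """Classify an action by its leading verb, e.g. 'delete_volume' -> 'delete'."""
    return action.split("_")[0] in HIGH_RISK


def execute(action: str, target: str, approve: Approver) -> str:
    """Run low-risk actions directly; route high-risk ones through a human."""
    if needs_approval(action) and not approve(action, target):
        return f"blocked: {action} on {target} (approval denied)"
    # A real agent would perform the system call here.
    return f"executed: {action} on {target}"


def deny_all(action: str, target: str) -> bool:
    """Approver that rejects everything, useful as a safe default."""
    return False


print(execute("read_logs", "db-1", deny_all))      # executed: read_logs on db-1
print(execute("delete_volume", "db-1", deny_all))  # blocked: delete_volume on db-1 (approval denied)
```

Defaulting to a deny-everything approver means a misconfigured agent fails closed: it can still read, but it cannot reach the "delete" button without an explicit human yes.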

Conclusion: From Talking to Doing

The transition from 2025 to 2026 marks the end of AI as a novelty and the beginning of AI as a utility. While 2025 transformed how we talk to technology, 2026 is transforming how that technology works for us. By shifting human talent away from repetitive operational "loops" and toward high-level strategy, we are finally realizing the promise of the autonomous enterprise.

Frequently Asked Questions

What is the difference between a chatbot and an AI agent?

A chatbot is reactive and 'read-only,' meaning it waits for prompts and provides text based on training data. An AI agent is proactive and 'read-write,' meaning it can pursue objectives, use tools, and execute actions within software systems.

What is the ReAct framework in AI?

ReAct stands for Reasoning + Acting. It is a method where an AI agent interleaves reasoning (thinking about the problem) with acting (using tools like APIs or search) to solve complex tasks with higher accuracy and fewer hallucinations.

Why are multi-agent systems better than single AI models?

Multi-agent systems decompose complex tasks into smaller roles (like planning or reviewing). This specialization reduces errors, improves stability, and allows agents to check each other's work, leading to higher success rates in complex workflows.